Most product teams think about mobile app quality in terms of bugs. A bug is reported, fixed, and the fix is released. What gets tracked far less closely is what happens to brand perception between the crash and the fix, and whether users will still be around by the time the patch ships.

Mobile apps occupy a different place in the brand relationship than websites or desktop software. They live on a device that users carry everywhere and open dozens of times a day, often at important moments such as making a payment, contacting customer service, or receiving a time-sensitive alert. In this context, a technical failure is not merely a quality problem. It is a brand experience.

The connection between the quality of mobile apps and brand loyalty is influenced by the types of failures, user behaviour, and the stage of the product lifecycle at which trust is built up or lost.

How Mobile App Quality Failures Erode Brand Trust

Building trust in a mobile product brand is a slow process, but losing it can happen quickly. Reliability is built up through many smooth interactions, but it can be challenged by a single failure at the wrong time.

The App Store Rating Problem

App store ratings are the most underrated brand mechanism in mobile product management. Most teams treat them as a measure of satisfaction. They are also a conversion metric: a public, enduring signal that affects how many new users download the app before they have ever engaged with the product.

A release with a major quality failure will typically attract one-star reviews within 48 to 72 hours. Those reviews are specific and searchable: "crashes on payment", "broke after the update", "lost all my data". These phrases surface in app store search and in the reviews section that buyers and enterprise procurement teams read before committing. A product with a 3.8 rating and recent quality complaints will lose downloads to a competitor with a 4.5 rating, even when the features are the same.

Recovery is slow. A single bad release can drop a product to 3.9, and it can take two or three release cycles of positive reviews to get back to 4.4, assuming no further quality incidents.
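A rough calculation shows why the climb back takes so long. The sketch below assumes the displayed rating is a plain arithmetic mean of all ratings, which is a simplification, since stores may weight recent ratings differently. The function name, the existing count of 1,000 ratings, and the 4.8 average for incoming ratings are all hypothetical values chosen for illustration.

```python
import math


def ratings_needed(current_avg: float, current_count: int,
                   target_avg: float, incoming_avg: float) -> int:
    """Estimate how many new ratings are needed to lift a plain average.

    Assumes the store shows a simple mean of all ratings (an assumption,
    real stores may weight recent ratings differently).
    """
    if current_avg >= target_avg:
        return 0
    if incoming_avg <= target_avg:
        raise ValueError("incoming ratings must average above the target")
    # Solve (current_avg * n + incoming_avg * k) / (n + k) = target_avg for k.
    needed = current_count * (target_avg - current_avg) / (incoming_avg - target_avg)
    # Round away floating-point noise before taking the ceiling.
    return math.ceil(round(needed, 6))


# Hypothetical inputs: 1,000 existing ratings averaging 3.9, target 4.4,
# new ratings averaging 4.8.
new_ratings = ratings_needed(3.9, 1000, 4.4, 4.8)
print(new_ratings)
```

Under these assumptions the app needs more new ratings than it has accumulated in total, which fits the observation that recovery spans multiple release cycles.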

Device Fragmentation and Silent Failures

The fragmentation of mobile devices poses quality issues that do not directly affect web products. What works well on a modern iPhone, for example, might not work in the same way on a three-year-old Android phone with a modified operating system (OS), a mid-range phone with low RAM, or a tablet with a different screen density. This results in a mixed brand experience, where the product performs smoothly for some customers and poorly for others, purely due to the hardware they are using.

An app that looks good on current iOS devices but cuts off vital user interface elements at popular Android resolutions delivers two different brand experiences to customers who are making real financial choices. Users who encounter a broken UI are unaware that they are in a second tier. All they know is that the app is not working, and this shapes their perception of the brand.

Silent failures compound the problem. Users stop relying on an app whose push notifications fire irregularly. Background sync that drops intermittently makes the app unreliable, even if users never complain. These failures fall below the threshold for filing a complaint: users do not report a delayed notification. Instead, they open a rival app. As a result, session frequency and engagement decline over weeks in a way that is hard to correlate with any particular release.

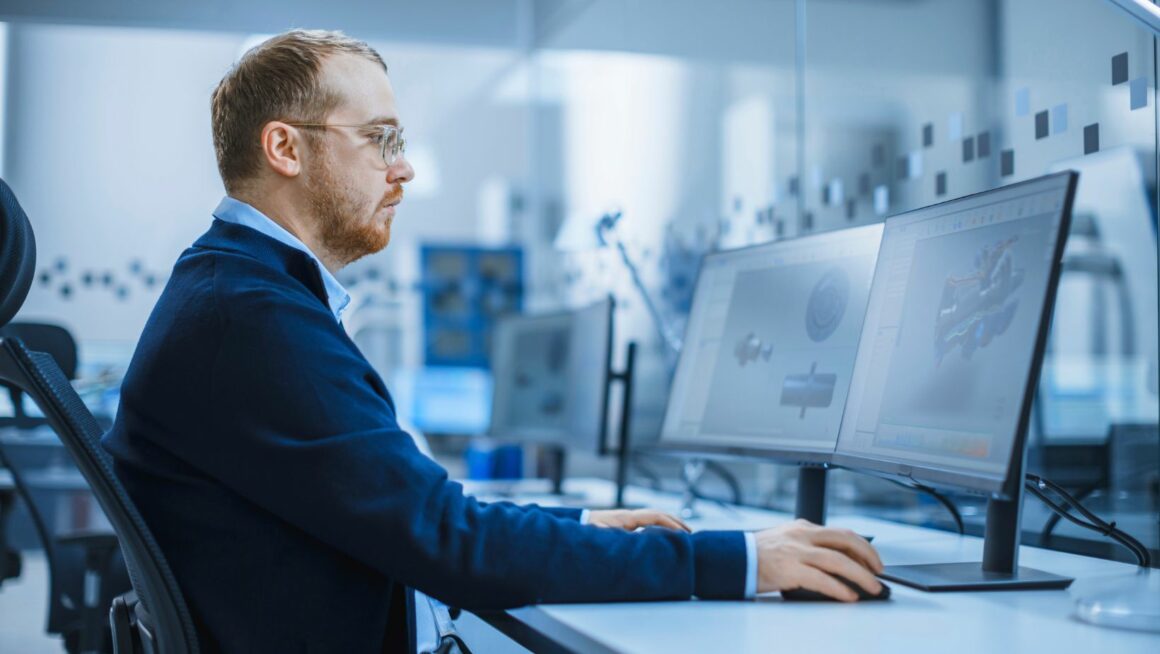

For mobile teams where internal QA hasn't kept pace with device fragmentation, bringing in QA engineers for hire with mobile specialization ensures coverage across the device and OS combinations that represent real user traffic, not just the devices the internal team happens to own.

What Mobile Testing Needs to Cover to Protect Brand Perception

The difference between bug-catching and brand-protecting mobile testing is largely one of prioritisation. The former is based on technical completeness, while the latter is based on failure modes that actually harm user trust.

The starting point is device coverage built from analytics data rather than device availability. App analytics and install data show which hardware and OS combinations account for the top 80-90% of real user traffic. Coverage based on that distribution captures the failures real users experience. A strategy built around whichever lab devices are convenient instead finds failures on hardware that nobody outside the team uses.
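As a minimal sketch of how analytics data could be turned into a device list, the function below ranks device and OS combinations by session count and keeps adding them until a target share of traffic is covered. The function name, the 85% default, and the sample session counts are hypothetical, and a real export from an analytics tool would need its own parsing step.

```python
from typing import Dict, List, Tuple


def select_coverage_set(sessions_by_config: Dict[str, int],
                        target_share: float = 0.85) -> Tuple[List[str], float]:
    """Pick the highest-traffic device/OS configs until target_share is covered."""
    total = sum(sessions_by_config.values())
    if total <= 0:
        return [], 0.0
    # Rank configurations from most to least traffic.
    ranked = sorted(sessions_by_config.items(), key=lambda kv: kv[1], reverse=True)
    selected: List[str] = []
    covered = 0
    for config, sessions in ranked:
        if covered / total >= target_share:
            break
        selected.append(config)
        covered += sessions
    return selected, covered / total


# Hypothetical session counts per device/OS combination.
sample = {
    "iPhone 15 / iOS 17": 400,
    "Galaxy A54 / Android 14": 250,
    "Pixel 7 / Android 14": 150,
    "Galaxy S10 / Android 12": 100,
    "Redmi Note 10 / Android 11": 60,
    "iPad (9th gen) / iPadOS 16": 40,
}
devices, share = select_coverage_set(sample, target_share=0.85)
print(devices, round(share, 2))
```

With this sample data, four configurations are enough to pass the 85% target, and the two lowest-traffic configurations are left out of the core test matrix.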

The same applies to OS version coverage. Android fragmentation means that many users are one to three major releases behind the current one. iOS users adopt new versions quickly, but not immediately. Testing only against the current OS versions consistently misses the behavioural differences that produce device-specific one-star reviews, which are the most difficult to respond to publicly.

Performance testing under real-world network conditions exposes failures that lab testing does not. An app that works well on high-speed Wi-Fi will not behave the same way on a congested 4G network or in an area with a weak signal. An API call that takes 200 ms in the lab can take far longer in the field. Users experiencing these issues do not distinguish between network and app problems – either way, the brand takes the hit.

OS update cycles are the most predictable source of brand-damaging mobile releases. Every major iOS and Android update introduces changes in behaviour, such as updates to the permission model, changes in the way background processes are handled, and differences in the way WebView renders, that break existing functionality in apps that were working correctly the week before. Teams without regression testing tied to the OS release calendar only discover these issues when users update and begin leaving negative reviews.

Alongside testing, app store review monitoring deserves a structural fix. Reviews are among the most reliable early warning systems for quality failures that internal testing has missed, yet most teams delegate review monitoring to marketing. The important signal isn't the aggregate star rating. It's the specific complaint patterns: clusters of reviews mentioning the same failure mode and spikes in one-star reviews tied to a specific release. These surface failure categories before support tickets accumulate and before the impact on ratings affects acquisition.
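The two signals described above, complaint clusters and release-specific one-star spikes, can be sketched in a few lines. This is an illustrative outline and not a production pipeline: the `Review` structure, the keyword lists, the 40% threshold, and the sample reviews are all hypothetical, and fetching reviews from a store is out of scope here.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Review:
    version: str  # app version the review was left on
    stars: int    # 1 to 5
    text: str


# Hypothetical failure-mode keywords; a real list would come from reading reviews.
FAILURE_KEYWORDS: Dict[str, List[str]] = {
    "crash": ["crash", "freezes"],
    "payment": ["payment", "checkout"],
    "data_loss": ["lost all my data", "data is gone"],
}


def flag_releases(reviews: List[Review], threshold: float = 0.4,
                  min_reviews: int = 3) -> List[str]:
    """Return versions whose share of one-star reviews meets the threshold."""
    totals: Counter = Counter()
    one_star: Counter = Counter()
    for r in reviews:
        totals[r.version] += 1
        if r.stars == 1:
            one_star[r.version] += 1
    return sorted(v for v in totals
                  if totals[v] >= min_reviews and one_star[v] / totals[v] >= threshold)


def complaint_clusters(reviews: List[Review], max_stars: int = 2) -> Dict[str, Counter]:
    """Count failure-mode mentions in low-star reviews, grouped by version."""
    clusters: Dict[str, Counter] = defaultdict(Counter)
    for r in reviews:
        if r.stars > max_stars:
            continue
        lowered = r.text.lower()
        for mode, keywords in FAILURE_KEYWORDS.items():
            if any(k in lowered for k in keywords):
                clusters[r.version][mode] += 1
    return dict(clusters)


# Hypothetical reviews for two releases.
reviews = [
    Review("2.3.0", 5, "Works great"),
    Review("2.3.0", 4, "Solid update"),
    Review("2.3.0", 1, "Crashes on payment"),
    Review("2.3.0", 5, "Love it"),
    Review("2.4.0", 1, "Crashes on payment every time"),
    Review("2.4.0", 1, "Broke after the update, app crashes at checkout"),
    Review("2.4.0", 2, "Lost all my data"),
    Review("2.4.0", 5, "Fine for me"),
]
flagged = flag_releases(reviews)
clusters = complaint_clusters(reviews)
print(flagged, dict(clusters.get("2.4.0", {})))
```

Keyword matching is crude, but it is often enough to show that a spike in one release is dominated by a single failure mode, which is the pattern worth routing to engineering and not only to marketing.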

For teams benchmarking their mobile testing approach, a ranked index of mobile app quality assurance providers gives a useful reference for what mature, specialized coverage looks like across device strategy, OS lifecycle testing, and real-world condition validation.

Conclusion

The quality of mobile apps and brand loyalty are more closely connected than most product teams account for in their testing strategy. A drop in app store ratings after a rough release, a cohort of users who churn for no apparent reason, and an enterprise prospect who reads recent reviews before a demo are all brand outcomes driven by quality decisions made weeks earlier in the release cycle.

Teams that sustain strong ratings and mobile retention over multiple release cycles test more deliberately against real-world conditions, across their actual user base and with a clear understanding of which failure modes carry the most weight for the brand.