The generative AI landscape is moving at a breathtaking pace. Just a short while ago, the tech world was captivated by text-to-image generators that could produce stunning visuals from a few simple words. Then came the text-to-video boom, allowing us to conjure moving scenes out of thin air. However, as the initial novelty settles, a more sophisticated, practical, and highly controllable paradigm is taking over: the Video-to-Video (V2V) AI revolution.

Unlike purely prompt-based generation, which often struggles to follow precise directions, V2V starts from existing footage and reshapes it. It bridges the gap between human intent, real-world physics, and digital imagination. As we enter this new era, video-to-video technology looks less like a fleeting trend and more like a foundation for the future of content creation.

The Need for Control and the Rise of V2V

The primary challenge with text-to-video generation has always been a lack of directorial control. You can describe a scene perfectly in a prompt, but the AI might still misinterpret the exact camera angle, the pacing of a character’s walk, or the subtle emotional nuance on an actor’s face.

Video-to-video solves this problem by using your original footage as a structural blueprint. The base video dictates the timing, the spatial relationships, and the human performance. The AI then acts as an ultra-advanced digital skin, altering the lighting, environment, and textures while strictly adhering to the original human motion.

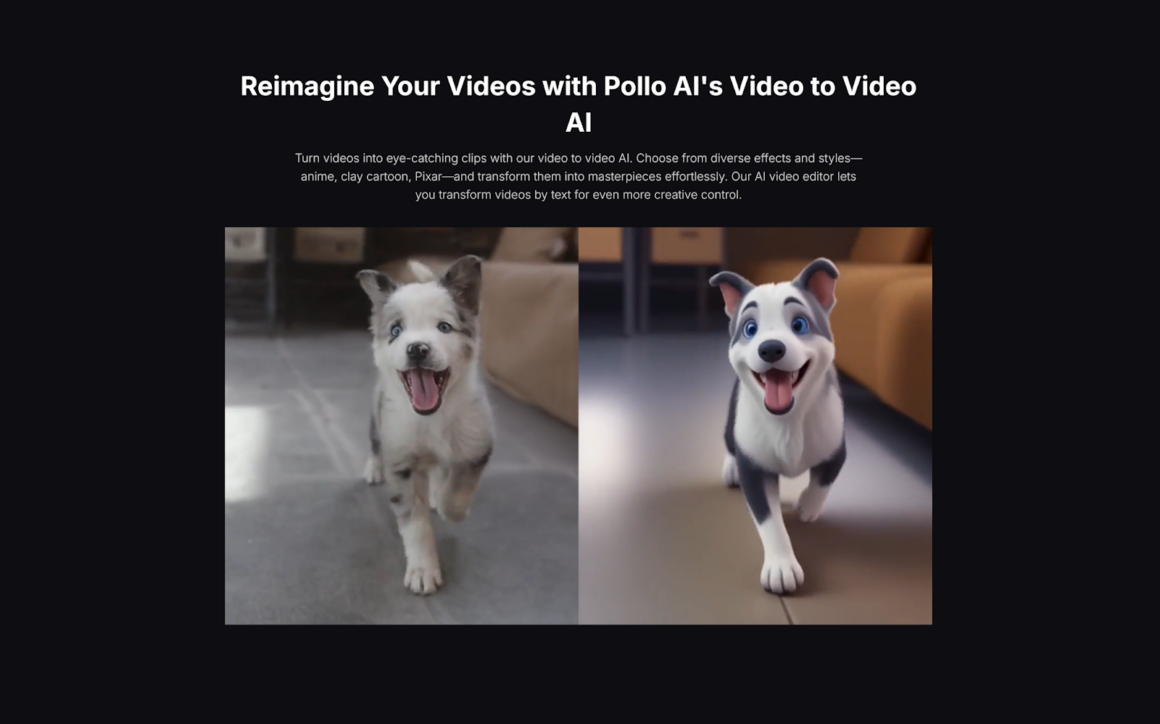

To see this revolution in action, look no further than platforms like Pollo AI, which show how accessible this tech has become. Pollo AI features an intuitive video to video AI tool that lets creators freely change video styles, turning a simple smartphone video into a sweeping cinematic cyberpunk scene or a fluid 2D anime sequence in just a few clicks. Users can also add filters and effects to fine-tune their visual narrative. But what truly makes it a game-changer is that Pollo AI operates as an “all-in-one agency.” Instead of forcing creators to juggle multiple subscriptions, it provides direct access to a large library of the industry’s most sought-after AI models. Whether you need the hyper-realism of Kling AI, the dynamic camera tracking of Luma AI, or the temporal consistency of Hailuo AI, Pollo AI brings these models together under one user-friendly roof.

What’s Next: The Future of Generative Media

With platforms making top-tier V2V tech so easily accessible, the barrier between imagination and execution is lower than it has ever been. So, as these models evolve, what exactly is next for the world of generative media?

Here are the major shifts we can expect to see in the near future:

1. The Democratization of High-End VFX

Historically, creating sci-fi, fantasy, or historical period-piece content required a massive budget for CGI, elaborate sets, and complex costume departments. V2V AI is completely dismantling these financial barriers. An indie filmmaker can now shoot a scene in a plain garage with actors wearing basic, everyday clothing. During post-production, V2V technology can seamlessly transform that garage into the interior of a spaceship or a grand medieval dining hall. High-end visual effects will no longer be exclusive to Hollywood studios; they will belong to anyone with a vision.

2. Hyper-Personalized Marketing at Scale

For the advertising world, V2V is a powerful tool for scale. Brands can shoot a single base commercial featuring an actor demonstrating a product. Using V2V, marketers can then alter the background environment, the actor’s wardrobe, or the overall aesthetic to appeal to completely different demographics, seasons, or cultural preferences. A sunny summer beach advertisement can be transformed into a cozy, snowy winter cabin scene with a few clicks, so a single video shoot can yield many tailored variants and a far better return on investment.

3. Real-Time Video-to-Video Processing

Currently, applying heavy V2V transformations requires rendering time on powerful cloud servers. The next major leap on the horizon is real-time processing. Imagine live-streaming on Twitch or YouTube, where your webcam doesn’t just cut out the background but actively translates your physical appearance and your entire room into a high-fidelity, stylized 3D avatar and environment in real time. This could reshape virtual meetings, online gaming, and interactive entertainment, and bring the metaverse closer to a practical reality.
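
To make the real-time requirement concrete, here is a rough back-of-the-envelope sketch in Python. It assumes that “real time” means each frame must be transformed within the time slot its frame rate allows. The function names and the 120 ms per-frame figure are hypothetical, chosen only for illustration, and are not measurements of any real model.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def required_speedup(per_frame_ms: float, fps: float) -> float:
    """How many times faster processing must get to keep up with the stream.

    A value of 1.0 or less means the pipeline already fits the budget.
    """
    return per_frame_ms / frame_budget_ms(fps)


if __name__ == "__main__":
    # Hypothetical figure: a transformation that takes 120 ms per frame.
    for fps in (24, 30, 60):
        print(f"{fps} fps: budget {frame_budget_ms(fps):.1f} ms, "
              f"speedup needed {required_speedup(120.0, fps):.1f}x")
```

At 30 frames per second, each frame has roughly 33 ms to work with, which is why real-time V2V is a much harder target than offline rendering.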

4. The End of the “AI Flicker”

The Achilles’ heel of early AI video was temporal consistency: the annoying flickering backgrounds, morphing limbs, and shifting textures from frame to frame. The next generation of V2V models is focused squarely on fixing this. With a deeper understanding of 3D space, depth, and real-world physics, future versions of models like Hailuo AI and Kling AI should make an AI-generated overlay look as solid and stable as practical effects. The visual difference between recorded reality and AI generation will become very hard to spot.
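
One simple way to put a number on flicker is to measure how much pixels change between consecutive frames. The Python sketch below is a toy illustration, not how Hailuo AI, Kling AI, or any production model evaluates consistency. It treats frames as small grayscale grids, and `flicker_score` is a hypothetical name. This naive measure also rises with genuine motion, so it is only meaningful on regions that should stay still, such as a static background.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame: rows of pixel intensities


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    if count == 0:
        raise ValueError("frames must not be empty")
    return total / count


def flicker_score(frames: List[Frame]) -> float:
    """Mean frame-to-frame change across a clip; 0.0 means perfectly stable."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = [mean_abs_diff(f0, f1) for f0, f1 in zip(frames, frames[1:])]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    stable = [[[100, 100], [100, 100]]] * 3
    flickery = [
        [[100, 100], [100, 100]],
        [[120, 80], [100, 100]],   # two pixels jump, then snap back
        [[100, 100], [100, 100]],
    ]
    print("stable clip:", flicker_score(stable))
    print("flickery clip:", flicker_score(flickery))
```

A lower score on a static region suggests a more stable result. A real evaluation would need to separate intended motion from unwanted flicker.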

Conclusion

The Video-to-Video AI revolution is fundamentally rewriting the rules of media production. We are rapidly transitioning from an era of complex, expensive CGI into an age where human imagination is the only limiting factor. As all-in-one platforms like Pollo AI continue to democratize access to industry-leading models, the power to reshape reality is being placed directly into the hands of everyday creators. The future of generative media isn’t just about typing text into a box—it’s about capturing the real world and redefining it, frame by frame, into whatever you can dream up.